The Argument That Wasn’t About What You Think

I watched two engineers nearly wreck a working relationship over a database migration.

It was a backlog refinement session. Both engineers agreed the work was important. Both agreed on the timeline. Both agreed on the technology. What they could not agree on - and I mean could not, voices rising, chairs pushing back from the table - was whether to batch the migration or run it incrementally.

The Scrum Master tried facilitating. “Let’s hear both sides.” They’d heard both sides. Three times. Same impasse, except louder.

Here’s what I noticed: they weren’t actually disagreeing about batch versus incremental. They were disagreeing about something underneath - something neither had named. One engineer had watched a batch migration fail catastrophically at a previous company. The other had watched an incremental migration drag on for nine months and never finish. Each had selected a single traumatic experience, built a worldview on top of it, and was defending that worldview as though it were objective fact.

They weren’t arguing about this migration. They were arguing about different migrations, at different companies, in different years. And neither of them knew it.

That’s the Ladder of Inference in action.

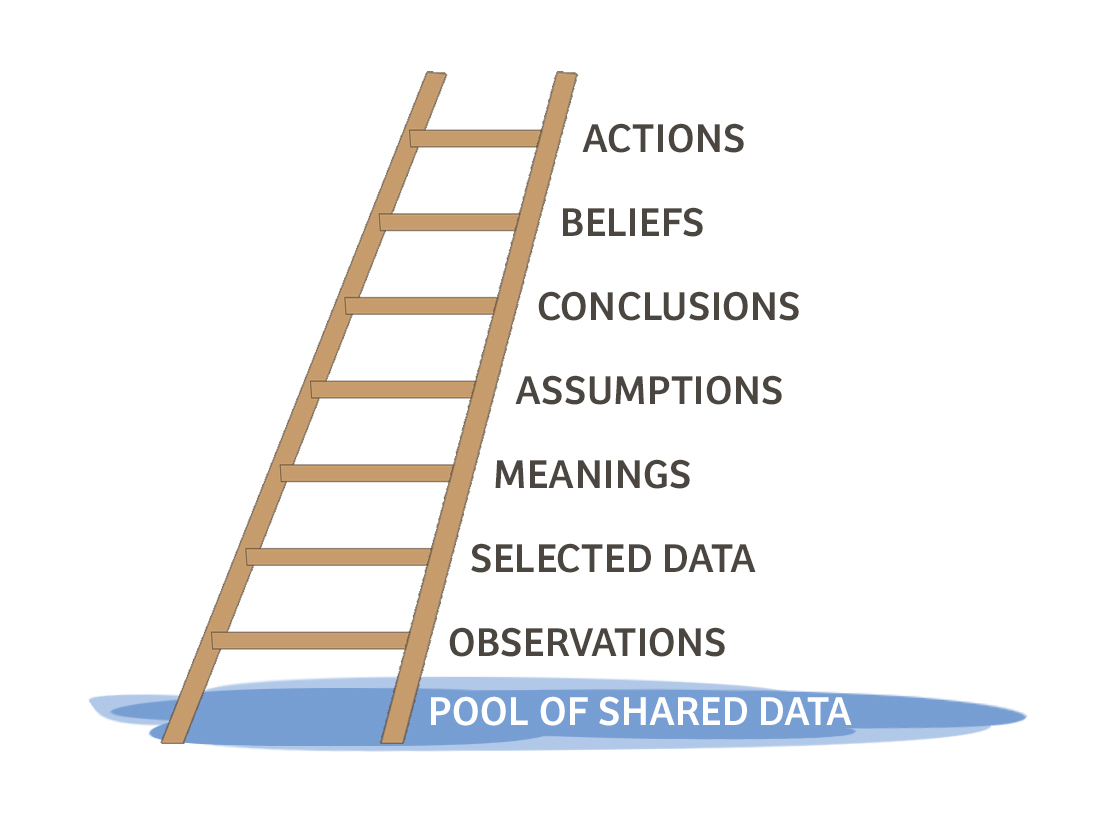

Chris Argyris, an organizational psychologist at Harvard, developed the Ladder of Inference as part of his broader work on organizational learning and defensive reasoning. He introduced it in Overcoming Organizational Defenses (1990), and Peter Senge brought it to a wider audience in The Fifth Discipline (1990) and the companion Fifth Discipline Fieldbook (1994), where Rick Ross wrote the definitive walkthrough. It’s one of the clearest tools I’ve found for understanding how smart, well-intentioned people can look at the same situation and arrive at completely different - and completely confident - conclusions.

Seven Rungs and a Reflexive Loop

The Ladder of Inference describes a mental process we all run, constantly, mostly without awareness. Seven rungs, from raw reality at the bottom to action at the top. The climb happens in milliseconds. By the time you’re aware you’ve reached a conclusion, you’ve already sprinted past the four rungs beneath it without checking any of them.

Let’s walk up.

Rung 1: Observable Data and Experience

This is the ground floor - the unfiltered pool of everything that’s actually happening. What was said, word for word. What was done, motion by motion. The raw sensory input before your brain gets its hands on it.

In a meeting, this would be a complete record of what happened - every word, every silence, every glance. It includes data that supports your emerging interpretation and data that contradicts it.

Nobody stays here. The pool is too vast to process in real time. Your brain has to select, and that selection is where the trouble starts.

Rung 2: Select Data

From the enormous pool of available data, you pick the pieces you pay attention to. This isn’t a conscious, deliberate act of curation - it’s automatic. Your brain highlights certain words, certain tones, certain facial expressions, and lets the rest fade into background noise.

This is the rung where most disagreements are actually born - not at the top of the ladder, where people are arguing about conclusions, but here, at the bottom, where they selected different slices of reality to build on.

In the migration argument, one engineer heard the phrase “run it all at once over the weekend” and his brain lit up like a fire alarm. The other engineer heard “roll it out gradually over six sprints” and felt a wave of dread. Same conversation. Different data selected. The rest of the ladder was downstream of that split.

(This is also why “let me play back what I heard you say” is one of the most powerful coaching moves in existence. It surfaces the selection.)

Rung 3: Add Meaning

Once you’ve selected your data, you interpret it. You assign meaning based on your personal and cultural context - your history, your training, your assumptions about how the world works.

“She didn’t make eye contact when she said that” is selected data. “She’s not being honest” is added meaning. The jump between those two is enormous, and it happens so fast that most people experience the meaning as the data. They don’t say “I noticed she looked away and I interpreted that as dishonesty.” They say “She was clearly lying.” The interpretation has fused with the observation.

This is where cultural and experiential differences create the biggest distortions. A developer’s silence during planning might mean “she’s disengaged” to one observer and “she’s processing carefully” to another. Same data, different meaning, both observers equally certain.

Rung 4: Make Assumptions

Based on the meaning you’ve added, you fill in the gaps. You project causes and motives onto the situation.

“She wasn’t honest about the timeline” becomes “She probably knows the project is behind and she’s hiding it.” You’ve moved from something you observed to something you invented - but it doesn’t feel invented. Given the meaning you assigned in Rung 3, this assumption follows naturally.

Assumptions are the invisible scaffolding of most workplace conflicts. People rarely state them out loud because they don’t experience them as assumptions. They experience them as obvious truths that anyone paying attention would share.

Rung 5: Draw Conclusions

Your assumptions crystallize into conclusions - definitive judgments about what’s happening and why.

“She’s hiding the fact that the project is behind” becomes “This project is in trouble and leadership doesn’t know.” The conclusion feels solid, earned, evidence-based. And in a narrow sense it is - there’s a chain of reasoning connecting it back to something you actually observed. But each link involved a choice you didn’t examine.

Here’s the thing about conclusions: they feel like the end of the thinking process, but they’re actually the middle. You don’t just conclude and stop. You conclude and then do something about it.

Rung 6: Adopt Beliefs

Conclusions that get reinforced over time harden into beliefs. “This project is in trouble” becomes “Projects led by people like her tend to be in trouble.” Or more broadly: “People in this organization hide bad news.”

Beliefs are the hardest rung to revisit because they feel the most fundamental. They’re not presented as interpretations - they’re presented as lessons learned. “I’ve been around long enough to know how this works.”

(And here’s where it gets uncomfortable: beliefs aren’t always wrong. Sometimes you climb the ladder and you’re right. Which makes it even harder to question the process, because the outcome validated it - even though the process was still full of unchecked assumptions.)

Rung 7: Take Action

At the top of the ladder, you act on your beliefs. You send the email. You escalate to management. You push back in the meeting. You disengage from the project. You argue passionately for batch migration or incremental migration.

The action feels proportionate and justified because it rests on the entire structure below it - a structure you built in milliseconds and never inspected.

And then something remarkable happens. You fall off the top of the ladder and land right back at the bottom. Except now you see the pool of observable data differently.

The Reflexive Loop

This is the part of the model that elevates it from “interesting cognitive diagram” to “genuinely unsettling insight into human behavior.”

Your beliefs influence which data you select the next time around. You’ve concluded that a colleague hides bad news. So the next time she gives a status update, your brain scans for signs of evasion. You notice the hesitation in her voice. You don’t notice that she voluntarily shared a risk she wasn’t asked about, because that doesn’t fit the belief you’ve already formed.

This is the dynamic psychologists call confirmation bias, built directly into the model as a loop. The ladder doesn’t describe a one-time inference process. It describes a self-reinforcing cycle where each trip up makes the next trip faster, more automatic, and more resistant to correction.

In teams, the reflexive loop feeds what Argyris called “defensive routines” - patterns of interaction that protect people from embarrassment or threat and, in doing so, shield existing beliefs from challenge. A team that believes “leadership doesn’t listen to us” will selectively notice every instance where feedback is ignored and overlook every instance where it isn’t. The belief manufactures its own supporting evidence.

That’s what makes this model so valuable for coaching. It explains the mechanism by which reasonable people, looking at the same situation, construct entirely different realities - and defend those realities as though the alternative is irrational.

What This Changes About Your Coaching

Once you see the ladder, you can’t unsee it. Every heated meeting, every “but that’s not what happened” exchange, every retro item that triggers a twenty-minute debate - underneath, people are standing on different rungs, arguing as though they’re on the same one.

Slowing Down the Climb

The single most useful coaching intervention from this model is teaching people to notice they’re climbing. Not to stop - that’s impossible - but to slow down enough to check the rungs.

In practice, this means building a habit of asking “What did I actually observe?” before moving to “What does it mean?” A team lead says, “The developers don’t care about quality.” I ask, “What did you see that led you there?” Usually: “Two bugs made it to production this sprint.” That’s the selected data. “They don’t care” is meaning and assumption stacked on top of it. There are fifteen other explanations that fit the same data.

The goal isn’t to prove anyone wrong. It’s to create a small gap between observation and conclusion - just enough space to ask, “Is there another way to read this?”

Walking People Down the Ladder

When two people are in conflict, they’re almost always arguing at the top of the ladder - competing conclusions, competing beliefs. Each person’s position feels airtight from the inside.

The move is to walk them back down. Not to the bottom - that feels patronizing. But down a few rungs. From conclusions to assumptions. From assumptions to meaning. From meaning to data.

“Can we take a step back? What specific things have each of you observed that led you to your position?”

This question surfaces the selected data - frequently revealing that two people are working from different subsets of reality. It reframes the disagreement from a clash of conclusions (personal, threatening) to a comparison of observations (collaborative, solvable).

I’ve watched this single move turn a fifteen-minute argument into a five-minute alignment conversation more times than I can count.

Teaching Teams the Vocabulary

You don’t need to give a lecture on Argyris to use this model. You just need to seed a few phrases into the team’s vocabulary.

“What rung are we on right now?” works surprisingly well. So does “I think we might be selecting different data.” Or the gentler version: “I’m noticing I have some assumptions baked in here - let me check them.”

When a team internalizes this vocabulary, the quality of their disagreements improves dramatically. They still disagree - that’s healthy - but they disagree at lower rungs, where the disagreements are resolvable. A disagreement about which data matters is solvable. A disagreement about whose worldview is correct is not.

Spotting the Reflexive Loop in Retrospectives

Retrospectives are where the reflexive loop is most visible - and most destructive.

A team that believes “the product owner doesn’t respect engineering time” will surface confirming retro items sprint after sprint. The PO added a story mid-sprint. Changed acceptance criteria. Scheduled a meeting during focus time. Each is real data. But the team overlooks the three times the PO pushed back on stakeholders to protect the sprint, or the email where she asked if the timing worked before scheduling.

Naming this pattern - gently - is one of the most impactful coaching moves available. “I notice we’ve surfaced five concerns about the PO in the last three retros. Has anything gone well in that relationship during the same period?” The question doesn’t accuse. It just widens the data pool. And widening the data pool is the antidote to the reflexive loop.

What It Looks Like in the Room

Darnell had been a Scrum Master at a state department of education for about eighteen months. His team built the data systems that school districts used to report student performance metrics to the state. Unglamorous work - form validations, data pipelines, compliance reports - but when the numbers were wrong, schools lost funding.

The team was six people: four developers, a business analyst named Claudia who’d spent fifteen years in the department before moving to IT, and a product owner named Jerome who’d come from the private sector two years earlier.

The problem was Claudia and Jerome.

They disagreed about everything. Not loudly - this was government, and government runs on professional courtesy - but persistently. Conversations would circle until someone glanced at the clock and suggested they “take it offline,” which meant it would resurface unchanged at the next refinement.

The current impasse was about data validation. Districts submitted student data through a web portal, and the existing system ran validations after submission - sometimes catching errors days later. Jerome wanted real-time checks as district staff typed, preventing bad data from ever entering the system.

Claudia thought this was a terrible idea.

From Darnell’s perspective, the disagreement made no sense. Real-time validation was objectively better for data quality. Jerome had user research showing late-cycle rejections were the number one complaint from district coordinators.

But Claudia kept saying “They’re not going to use it the way you think” and “You don’t understand how districts actually work.” Jerome kept responding with more data. The more data he presented, the more entrenched Claudia became.

After the third stalemate, Darnell asked them to try something. He drew a simple ladder on the whiteboard - seven rungs, bottom to top. No model name, no lecture. “I want to walk through how each of you got to your position. Not to prove anyone right - just to understand the path.”

He started with Jerome. “What’s the specific thing you observed that made you want to build this?”

Jerome pointed to the support data. “Forty-two percent of district support tickets last quarter were about validation rejections that came days after submission. Districts are doing double work. Real-time validation eliminates that entirely.”

Darnell mapped Jerome’s ladder on the board. Observable data: 42% of tickets are late rejections. Selected data: the volume and the rework pattern. Meaning: this is the biggest pain point. Assumption: fixing the entry experience means cleaner data. Conclusion: real-time validation is the right solution.

Then he turned to Claudia. “Same question. What did you observe?”

Claudia was quiet for a moment. Then she said something that reframed the entire conversation.

“I was a district data coordinator for eight years. Those people aren’t data entry specialists - they’re school secretaries, assistant principals, guidance counselors. They’re entering data between answering phones and handling parent complaints at the end of the day when they’re exhausted. The reason they submit incomplete data isn’t that they don’t see the errors. It’s that they can’t fix them yet because they don’t have the information. They enter what they have and plan to come back later.”

She paused. “If you put real-time validation on that form, you’re going to block them from saving partial work. They’ll hit a wall of red error messages on data they already know is incomplete, and they’ll call us furious. Or worse - they’ll go back to mailing spreadsheets.”

The room was quiet.

Darnell mapped Claudia’s ladder next to Jerome’s. Observable data: eight years watching district staff work. Selected data: the partial-entry workflow, the multitasking environment. Meaning: late validation accommodates how districts actually work. Assumption: blocking validation will break existing workflows. Conclusion: this feature will make things worse.

He stepped back. “You’re both right. You’re just starting from different data.”

Jerome had selected the support ticket data - quantitative, clear, compelling. Claudia had selected her lived experience in district offices - qualitative, contextual, equally compelling. Neither was wrong. But each had been arguing from their conclusion as if the other person’s data didn’t exist.

Jerome was quiet for a long moment. Then: “What if the validations were warnings instead of blockers? Flag the issues, let them save, but show them exactly what needs fixing before final submission.”

Claudia leaned forward. “That could work. If they can save partial data and come back to it - that’s how they already work. You’re just making the ‘come back to it’ part easier.”

In forty-five minutes, the team sketched out a validation model that neither of them would have designed alone. Non-blocking inline warnings during entry, with a summary dashboard showing outstanding issues per submission. Districts could work the way they already worked while seeing exactly what still needed attention before the deadline.

Two sprints later, validation-related support tickets dropped by sixty percent. Not because the system blocked bad data, but because it made incomplete data visible without punishing the people entering it.

At the retrospective, Claudia said something that stuck with Darnell. “Jerome had better data. I had better context. We needed both, but we spent three weeks acting like only one could be right.”

Darnell kept the ladder on the whiteboard. Over the following months, it became shorthand. When a refinement discussion started heating up, someone would say, “What rung are we on?” People still disagreed - but they caught the ladder climb faster. And catching it faster meant more time solving problems, less time defending positions.

That’s what the Ladder of Inference does. It doesn’t eliminate the climb; you can’t, because that’s how brains work. But it makes the climb visible. And visible things can be examined, questioned, and occasionally climbed back down.

Go Deeper

- Argyris, C. (1990). Overcoming Organizational Defenses: Facilitating Organizational Learning. Allyn & Bacon. The source. Argyris introduces the Ladder of Inference as part of his broader work on defensive routines and double-loop learning. Dense but essential.

- Senge, P. (1990). The Fifth Discipline: The Art and Practice of the Learning Organization. Doubleday. The book that brought systems thinking - and the Ladder of Inference - to a mainstream management audience. Chapter 10 on mental models is where the ladder gets its fullest treatment.

- Ross, R. (1994). “The Ladder of Inference.” In Senge, P. et al., The Fifth Discipline Fieldbook: Strategies and Tools for Building a Learning Organization. Currency Doubleday. The most practical, step-by-step walkthrough of the model. If you only read one reference, read this chapter.

- Argyris, C. (1991). “Teaching Smart People How to Learn.” Harvard Business Review, May-June 1991. Not specifically about the ladder, but Argyris’s most accessible article on why intelligent professionals are often the worst at examining their own reasoning. Pairs beautifully with the model.

- Senge, P. et al. (2012). Schools That Learn: A Fifth Discipline Fieldbook for Educators, Parents, and Everyone Who Cares About Education. Crown Business. Updated applications of the Ladder of Inference in educational settings - useful if you coach in edtech or work with non-technical stakeholders.