The Most Confident Person in the Room

Early in my coaching career, I facilitated a sprint planning session where a developer - three weeks into his first agile project - confidently declared that the team’s estimation process was broken and he knew exactly how to fix it. He had a slide deck. He had metrics from a blog post. He had conviction that could bend steel.

The two senior engineers on the team exchanged a look I’ve since learned to recognize. It’s the look of someone who knows enough to know how much they don’t know - watching someone who doesn’t know enough to have that problem yet.

Here’s the thing. That junior developer wasn’t stupid. He wasn’t arrogant in any meaningful character sense. He was experiencing something psychologists identified formally in 1999, when David Dunning and Justin Kruger published their now-famous paper “Unskilled and Unaware of It: How Difficulties in Recognizing One’s Own Incompetence Lead to Inflated Self-Assessments” in the Journal of Personality and Social Psychology. The title alone is worth the price of admission.

Their core finding was deceptively simple: people who lack skill in a domain also lack the ability to recognize that they lack skill in that domain. The very expertise you need to evaluate your performance is the same expertise you’re missing. It’s a cognitive trap with no obvious exit - and if you coach teams for a living, you’re swimming in it daily.

The Model Everyone Draws Wrong

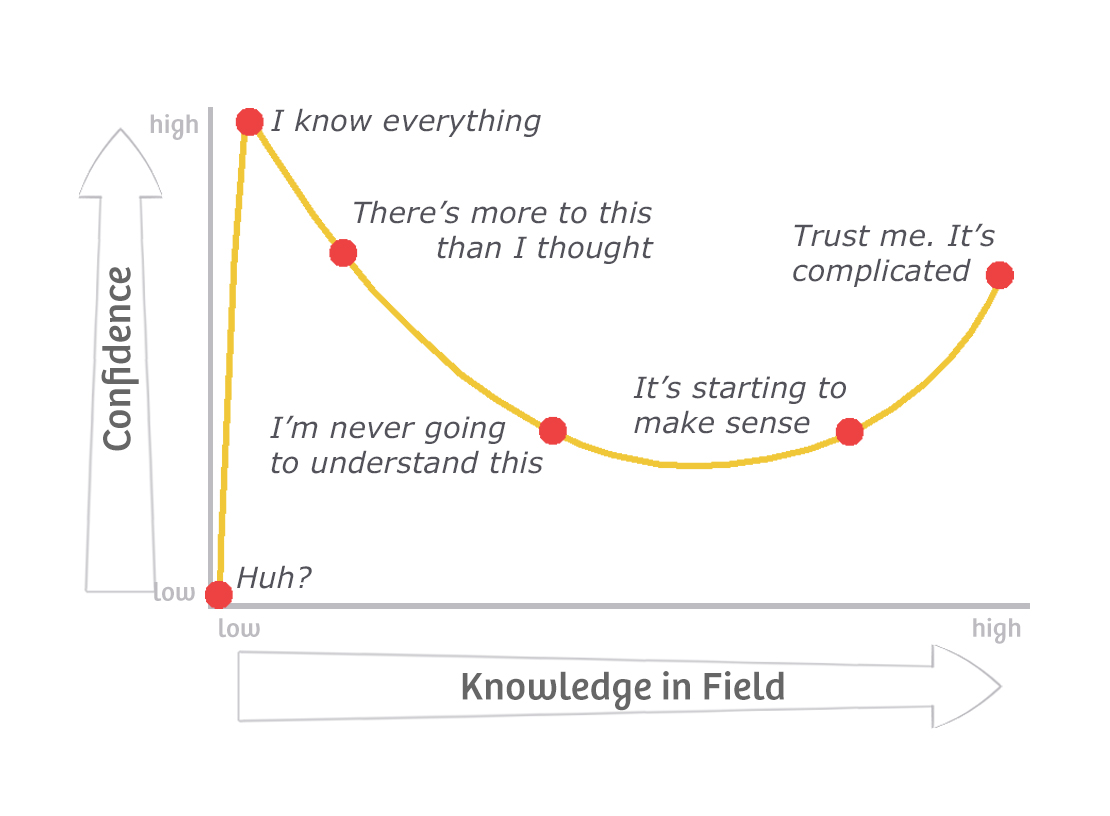

You’ve almost certainly seen the Dunning-Kruger chart - the swooping line that climbs a “Mount Stupid,” plunges into a “Valley of Despair,” then gradually ascends a “Slope of Enlightenment.” It’s everywhere. LinkedIn posts, conference slides, motivational posters in coworking spaces.

There’s just one problem. Dunning and Kruger never drew that chart.

The Original Study

The 1999 study was elegantly constructed. Dunning and Kruger tested Cornell undergraduates across three domains: logical reasoning, grammar, and humor. (Yes, humor. They asked people to rate how funny jokes were and compared their ratings to a panel of professional comedians. Science is wonderful sometimes.)

After each test, participants estimated how well they’d done relative to their peers. The pattern was consistent across all three domains.

Bottom-quartile performers estimated themselves around the 60th-70th percentile. They didn’t just think they were average - they thought they were above average. Top-quartile performers estimated themselves around the 70th-75th percentile - accurate-ish, but actually a slight underestimate of their real standing.

The data was presented as a simple bar chart. Four quartiles. Estimated vs. actual performance. No swooping curves. No Mount Stupid.

The study also included a clever fourth experiment. After bottom-quartile participants were given a short training in logical reasoning, their ability to assess their own earlier performance improved significantly. They could suddenly see what they’d been missing. That detail matters enormously for coaching - and we’ll come back to it.

The Four Patterns

The study identified four interlocking patterns that make the effect sticky:

Low performers significantly overestimate their ability. Not by a little. Bottom-quartile participants overestimated their test performance by roughly 40-50 percentile points. That's the headline finding, and it holds up across dozens of replications.

High performers slightly underestimate their ability. Top-quartile participants consistently rated themselves lower than their actual performance. Not dramatically - they still knew they were good. But they assumed others were closer to their level than reality suggested. (The original paper ties this to the false-consensus effect: assuming that what comes easily to you comes easily to everyone.)

Low performers cannot recognize competence in others. When asked to grade a set of their peers’ tests, stronger ones included, and then re-evaluate their own performance, low performers didn’t budge. They couldn’t see what made the better answers better. The skill required to evaluate quality was the same skill they were missing.

Training improves self-assessment. When low performers were taught the relevant skills, their ability to recognize their previous deficits improved. This is the hopeful finding that coaches should keep in their back pocket. The effect is not permanent. Learning is the exit.

The Confidence-Competence Curve

That famous swooping chart - the one with Mount Stupid and the Valley of Despair - appears to have been created by the internet, not by researchers. It’s a folk interpretation that merges the Dunning-Kruger findings with the general learning curve concept. And while it captures something emotionally true about the experience of gaining expertise, it’s not what the data actually shows.

The real data is less dramatic but more useful. It shows a calibration gap between perceived and actual ability that narrows as competence increases. Beginners are poorly calibrated. Experts are better calibrated, though they tend to err on the side of modesty. The middle is messy. That’s it. That’s the finding.

The swoopy chart isn’t science - it’s vibes. Good vibes, arguably useful vibes, but vibes nonetheless.

That said, there’s a reason the folk version went viral and the actual bar chart didn’t. The swoopy curve tells a story that resonates with lived experience: the painful moment when you realize you know less than you thought, followed by the slow climb toward genuine competence. Most experts can point to their own Valley of Despair moment. It’s real as an experience - it’s just not what the 1999 data measured. As a coach, I’ll sometimes reference the general shape of the learning curve while being clear that the original research is about calibration gaps, not emotional stages. Intellectual honesty and practical usefulness don’t have to be enemies.

What the Effect Does NOT Say

This matters for coaches, so I’m going to be direct about it.

The Dunning-Kruger effect does not say “stupid people are confident.” It does not say confidence is inversely correlated with intelligence. It does not say experts are paralyzed by self-doubt. It does not say that if someone is confident, they’re probably wrong.

What it says is narrower and more precise: within a specific domain, people with low skill tend to overestimate their skill because they lack the metacognitive tools to evaluate their own performance accurately. That’s a statement about calibration, not character.

This distinction matters because the Dunning-Kruger effect has become internet shorthand for “that person who disagrees with me is an idiot who doesn’t know they’re an idiot.” That’s not science. That’s name-calling with a citation.

And there’s a legitimate methodological debate worth acknowledging. In 2020, Gilles Gignac and Marcin Zajenkowski published an analysis arguing that much of the Dunning-Kruger pattern is a statistical artifact - a natural consequence of how bounded scales and regression to the mean interact with noisy self-assessment data. The argument is technical but serious: when you ask people to estimate a bounded quantity, low performers will tend to overestimate and high performers will tend to underestimate even if there’s no cognitive bias at play. Someone who scores near the bottom has plenty of room to guess too high and almost none to guess too low; at the top, the reverse holds. It’s just math.

Dunning himself has responded to these critiques, arguing that the statistical artifact explanation cannot account for the full pattern - particularly the finding that low performers fail to improve their self-assessment even after seeing superior work. The debate continues in the literature and hasn’t been fully resolved.
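To make the statistical-artifact argument concrete, here is a toy simulation in Python. It is my own illustration, not the method from Gignac and Zajenkowski’s paper. It assumes, purely for the sake of the sketch, that a test score and a self-estimate are each “true skill plus independent random noise,” with an arbitrary noise level. Nobody in this simulation has a metacognitive deficit, yet the bottom quartile still appears to overestimate and the top quartile to underestimate.

```python
import random
from statistics import mean


def percentile_ranks(values):
    """Return each value's percentile rank (0-100) within the sample."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    for position, index in enumerate(order):
        ranks[index] = 100.0 * (position + 0.5) / len(values)
    return ranks


def simulate_quartiles(n=10_000, noise=1.0, seed=42):
    """Mean (actual, perceived) percentile for each quartile of test score.

    Assumption for illustration only: test score and self-view are both
    unbiased, noisy readings of the same skill. No bias is built in.
    """
    rng = random.Random(seed)
    skill = [rng.gauss(0, 1) for _ in range(n)]
    test_score = [s + rng.gauss(0, noise) for s in skill]
    self_view = [s + rng.gauss(0, noise) for s in skill]

    actual = percentile_ranks(test_score)
    perceived = percentile_ranks(self_view)

    # Group people by the quartile their *test score* falls into.
    groups = {q: ([], []) for q in (1, 2, 3, 4)}
    for a, p in zip(actual, perceived):
        quartile = min(int(a // 25) + 1, 4)
        groups[quartile][0].append(a)
        groups[quartile][1].append(p)
    return {q: (mean(a), mean(p)) for q, (a, p) in groups.items()}


if __name__ == "__main__":
    for quartile, (actual, perceived) in simulate_quartiles().items():
        print(f"Q{quartile}: actual {actual:5.1f}  perceived {perceived:5.1f}")
```

Notice what the toy model leaves out. Its bottom quartile drifts toward the middle but stays below it, whereas the 1999 participants placed themselves above average. Noise alone gets you the tilt, not the whole picture, which is one reason the debate is still open.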

Here’s my coaching take: even if some portion of the measured effect is statistical noise, the core phenomenon is real enough that every experienced coach recognizes it instantly. Teams contain people at different skill levels who assess their own competence with wildly different accuracy. That’s not a hypothesis - it’s a Tuesday. The question is what you do about it.

What This Changes About Your Coaching

Estimation and Planning

If you’ve ever watched a team’s estimates improve as they gain experience together, you’ve seen the Dunning-Kruger dynamic play out in miniature. New teams tend to underestimate complexity - not because they’re lazy, but because they genuinely don’t know what they don’t know yet. The unknowns are invisible to them.

This is part of why velocity usually takes a few sprints to stabilize (three to four is a common rule of thumb). It’s not just about learning the codebase or the tooling. It’s about the team developing enough domain knowledge to accurately assess what’s hard. Before that threshold, their estimates reflect confidence more than competence.

Practical move: when a new team member confidently estimates a story as small, don’t dismiss it - but do ask, “What assumptions are baked into that estimate?” Often the assumptions reveal the knowledge gaps the estimate doesn’t account for.

I’ve also found Planning Poker to be a quiet Dunning-Kruger corrective. When a junior developer throws a 2 and everyone else throws an 8, the ensuing conversation isn’t about who’s right - it’s about what the junior developer hasn’t considered yet. The format makes the calibration gap visible without making it personal. Nobody’s wrong. Somebody just hasn’t seen the dragons in that part of the codebase yet. (There are always dragons.)
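If your team runs Planning Poker in a tool or a spreadsheet, the “who talks first” rule can be sketched in a few lines of Python. Everything here is hypothetical: the deck, the function name `find_spread`, and the one-step tolerance are my assumptions, not part of any Planning Poker standard. The idea is only to surface the gap and name who holds each end of it.

```python
DECK = [1, 2, 3, 5, 8, 13, 21]  # hypothetical deck; adjust to your team's cards


def find_spread(votes, max_steps=1):
    """Return who should talk first when votes diverge, else None.

    votes maps a name to the card that person played. Spread is measured
    in deck positions, so a 2 and an 8 are three steps apart.
    """
    positions = {name: DECK.index(card) for name, card in votes.items()}
    low, high = min(positions.values()), max(positions.values())
    if high - low <= max_steps:
        return None  # close enough; no calibration conversation needed
    return {
        "steps_apart": high - low,
        "lowest": sorted(n for n, p in positions.items() if p == low),
        "highest": sorted(n for n, p in positions.items() if p == high),
    }


if __name__ == "__main__":
    print(find_spread({"junior": 2, "senior_a": 8, "senior_b": 8}))
```

The output deliberately lists names at both extremes rather than declaring a winner. The conversation that follows is the point, not the number.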

Peer Reviews and Feedback

The effect creates an asymmetry in peer feedback that coaches need to navigate carefully. Less experienced team members may struggle to give useful code reviews or design critiques - not because they don’t care, but because they literally cannot see what they’re missing. Meanwhile, highly skilled team members may assume their feedback is obvious and under-explain it.

Build structured review protocols. Checklists, templates, and explicit criteria don’t just catch defects - they scaffold the metacognitive skills that the Dunning-Kruger effect says low performers lack. A code review checklist that says “check for error handling” teaches a junior developer to look for something they might not have known to look for.

(This is also why pair programming is quietly one of the best Dunning-Kruger interventions available. You can’t overestimate your skill when you’re sitting next to someone who can see your screen.)

Self-Assessment and Growth

Most agile organizations use some form of self-assessment - skill matrices, competency maps, individual development plan (IDP) conversations. The Dunning-Kruger effect suggests these tools are least accurate for the people who need them most.

Don’t abandon self-assessment. Instead, calibrate it. Pair self-ratings with peer ratings. Use concrete behavioral anchors instead of abstract scales. “I can deploy to production independently” is more useful than “Rate your DevOps skills 1-5.” The more specific the anchor, the harder it is for the calibration gap to hide.
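Pairing self-ratings with peer ratings is easy to sketch in Python. This is a minimal illustration under my own assumptions: a hypothetical 0-3 scale per behavioral anchor, a made-up function name `calibration_gaps`, and an arbitrary threshold of one point. It only flags anchors where someone rates themselves well above the peer average; the result is a conversation starter, not a verdict.

```python
from statistics import mean


def calibration_gaps(self_ratings, peer_ratings, threshold=1.0):
    """Return anchors where the self-rating exceeds the peer average.

    self_ratings: {anchor: score}; peer_ratings: {anchor: [scores]}.
    Scores use a hypothetical 0-3 scale (0 = not yet, 3 = can teach it).
    """
    gaps = {}
    for anchor, own in self_ratings.items():
        peers = peer_ratings.get(anchor)
        if not peers:
            continue  # no peer data, nothing to compare against
        gap = own - mean(peers)
        if gap >= threshold:
            gaps[anchor] = round(gap, 2)
    return gaps


if __name__ == "__main__":
    mine = {
        "I can deploy to production independently": 3,
        "I can write a failing test first": 2,
    }
    peers = {
        "I can deploy to production independently": [1, 2, 1],
        "I can write a failing test first": [2, 2, 3],
    }
    print(calibration_gaps(mine, peers))
```

The same comparison run with the sign flipped would surface the opposite case: strong performers who rate themselves below where their peers put them.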

There’s a timing dimension here too. In my experience, self-assessments done before a challenging sprint tend to show wider calibration gaps than self-assessments done after. The experience itself is a teacher. If your team does quarterly skill reviews, consider scheduling them right after a particularly stretching piece of work - not during a quiet maintenance phase when everyone feels comfortable. Comfort breeds overestimation. Recent struggle breeds accuracy.

Coaching Leaders

Some of the most consequential Dunning-Kruger dynamics happen at the leadership level. A director who was promoted from engineering may have deep technical expertise but limited skill in organizational design - and may not recognize that gap because they’ve never needed to. They’ll approach a reorg with the same confidence they brought to system architecture, unaware that the domains require fundamentally different competencies.

When coaching leaders, I’ve found it helpful to ask domain-specific questions rather than general ones. Instead of “How confident are you in this plan?” try “What’s your experience with this specific type of change?” The first question invites overconfidence. The second invites honest reflection.

There’s also a structural move that helps. Encourage leaders to build teams that include people who know more than they do in specific areas - and then actually listen to those people. This sounds obvious, but the Dunning-Kruger effect predicts exactly why it’s hard: if a leader can’t fully appreciate the depth of expertise they lack, they may not recognize the value of the expert they hired. I’ve watched VPs override their own architects on technical decisions, not out of malice, but because they genuinely believed the problem was simpler than the architect was making it. The metacognitive gap doesn’t care about your title.

What It Looks Like in the Room

Marcus had been a Scrum Master at a mid-size retail company for about eighteen months - the kind of company where half the tech stack was inherited from an acquisition nobody fully understood and the other half was built by a contractor who left excellent documentation. (I’m kidding. The contractor left no documentation. They never do.)

His current team - five developers building an inventory management tool for the company’s warehouse operations - was mostly solid. Good communication, reasonable velocity, genuine care about quality.

The wrinkle was Devon.

Devon had joined the team two months ago, fresh from a bootcamp and radiating enthusiasm. He was smart, he worked hard, and he had opinions about everything. In his first refinement session, he’d suggested rewriting the API layer in a different framework. In his second, he’d proposed a new branching strategy. By the third, two of the senior developers had started going quiet during discussions.

Marcus noticed it in retro. Priya, the team’s most experienced developer, gave shorter answers than usual. When Devon suggested the team “wasn’t being innovative enough,” Priya said, “We’re fine,” and looked at her laptop.

After the retro, Marcus asked Priya for a coffee chat. She was direct. “Devon doesn’t know what he doesn’t know. He’s proposing changes to systems he doesn’t understand yet, and I’m tired of being the one to explain why his ideas won’t work. It feels like I’m either his teacher or the person who says no to everything.”

Marcus recognized the dynamic. Devon wasn’t being difficult - he was operating from a mental model that was too simple to reveal its own gaps. He genuinely believed the API rewrite was straightforward because he couldn’t see the complexity that Priya could see. And Priya, who could see all the complexity, was burning out on the emotional labor of constantly translating between what Devon thought was easy and what was actually involved.

Here’s what Marcus didn’t do: he didn’t pull Devon aside and say, “You’re experiencing the Dunning-Kruger effect.” (Nothing good has ever come from telling someone they’re Dunning-Krugering. Nothing. Ever. Don’t do it.)

Instead, he made two moves over the next sprint.

First, he restructured refinement. Instead of open discussion, he introduced a protocol where each story started with the most experienced person describing the technical landscape - the constraints, the dependencies, the things that had been tried before. Not as a lecture. As context-setting. Devon still contributed, but now he was contributing with more of the picture visible.

Second, he paired Devon with Priya for two days on a moderately complex story. Not as mentorship - as genuine pairing. Devon drove part of the time. He hit the complexity wall on day one, when a change he thought would take twenty minutes cascaded into four files he didn’t know existed. Priya didn’t say “I told you so.” She said, “Yeah, that’s the part that isn’t obvious until you’re in it.”

By the end of the second day, Devon’s estimates for similar work had shifted noticeably. Not because anyone told him he was wrong - but because he’d developed enough firsthand experience to see what he’d been missing. The calibration gap closed through learning, exactly the way Dunning and Kruger’s research predicted it would.

At the next retro, Priya talked more. Devon asked more questions and made fewer proposals. Marcus didn’t mention cognitive biases or psychology papers. He just built a team structure where accurate self-assessment could develop naturally.

(And six months later, Devon was the one patiently explaining system complexity to the next new hire. He even used the phrase “that’s the part that isn’t obvious until you’re in it” - word for word. The cycle continues, and honestly, that’s kind of beautiful.)

Go Deeper

- Kruger, J. & Dunning, D. (1999). “Unskilled and Unaware of It: How Difficulties in Recognizing One’s Own Incompetence Lead to Inflated Self-Assessments.” Journal of Personality and Social Psychology, 77(6), 1121-1134. The original paper. Readable, well-constructed, and shorter than you’d expect.

- Dunning, D. (2011). “The Dunning-Kruger Effect: On Being Ignorant of One’s Own Ignorance.” Advances in Experimental Social Psychology, Vol. 44, 247-296. Dunning’s own retrospective on a decade of follow-up research. More nuanced than the original and addresses early criticisms.

- Gignac, G.E. & Zajenkowski, M. (2020). “The Dunning-Kruger effect is (mostly) a statistical artefact: Valid approaches to testing the hypothesis with individual differences data.” Intelligence, 80, 101449. The strongest methodological critique. Worth reading to understand the debate honestly.

- Dunning, D. (2012). Self-Insight: Roadblocks and Detours on the Path to Knowing Thyself. Psychology Press. A broader look at self-assessment failures beyond the original effect. Useful for coaches who want the full picture of why people misjudge their own abilities.

- Schlösser, T. et al. (2013). “How Unaware Are the Unskilled? Empirical Tests of the ‘Signal Extraction’ Counter-Explanation for the Dunning-Kruger Effect in Self-Evaluation of Performance.” Journal of Economic Psychology, 39, 85-100. A direct test of the statistical artifact hypothesis that supports the original cognitive bias explanation.