The Estimate That Wrote Itself

A few years ago, I was coaching a team that had just finished a truly brutal sprint. They’d committed to twelve stories, completed four, and carried eight into the next sprint. Again. Third time in a row.

During the retro, I asked a simple question: “When you estimated those stories in planning, how long did you actually spend thinking about each one?”

Silence. Then the tech lead laughed. “Maybe fifteen seconds? We just sort of… knew.”

They didn’t know. They felt like they knew. And that feeling - that instant, confident, automatic sense of certainty - is exactly what Daniel Kahneman spent forty years studying.

Kahneman, a psychologist, won the 2002 Nobel Prize in Economics, which tells you something about how far his work reaches. (A psychologist winning the economics Nobel is like a drummer winning a guitar competition. It shouldn’t work, but the performance was undeniable.) His research, conducted primarily with his longtime collaborator Amos Tversky starting in the early 1970s, fundamentally changed how we understand human judgment and decision-making. Their landmark 1974 paper in Science - “Judgment Under Uncertainty: Heuristics and Biases” - kicked open a door that hasn’t closed since.

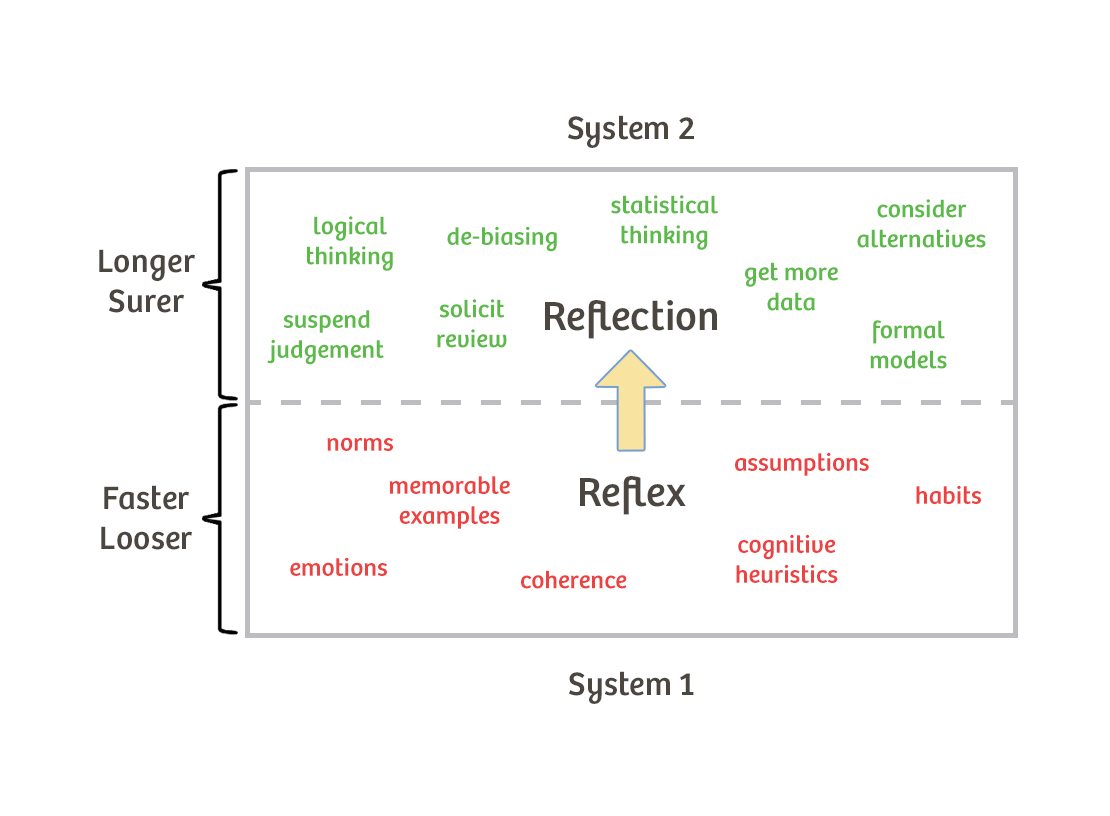

In 2011, Kahneman distilled decades of findings into Thinking, Fast and Slow, a book that became a bestseller and gave the world a deceptively simple model: your brain runs two systems. One is fast, intuitive, and effortless. The other is slow, analytical, and lazy. And the fast one is running the show far more often than you think.

For agile coaches, this isn’t just interesting psychology. It’s the operating manual for half the dysfunctions you encounter in sprint planning, retrospectives, and product decisions.

Two Systems, One Overworked Brain

Kahneman describes human cognition as a collaboration - sometimes a conflict - between two mental systems. He deliberately gave them bland names to avoid implying that one is better than the other. They’re different tools for different jobs.

System 1: Fast, Automatic, Effortless

System 1 is the part of your brain that just goes. It reads facial expressions, completes the phrase “bread and ___,” dodges a pothole while you’re thinking about dinner, and produces a gut feeling about whether a story is a 3 or an 8 before anyone has finished reading the acceptance criteria.

It operates automatically, with little or no effort and no sense of voluntary control. It’s pattern-matching at speed. It draws on experience, association, and emotion to produce impressions, feelings, and quick judgments.

System 1 is extraordinarily good at what it does. It keeps you alive in traffic. It lets experienced developers spot a code smell in seconds. It’s why a seasoned Scrum Master can walk into a standup and sense that something is off before anyone says a word.

The catch is that System 1 doesn’t know its own limits. It generates answers to questions - even when the question is harder than it realizes. When System 1 can’t find a quick answer to the real question, it quietly substitutes an easier question and answers that one instead. You asked, “How long will this integration take?” System 1 answered, “How hard does this feel compared to the last integration?” - and served up that answer as if it were the same thing.

System 2: Slow, Deliberate, Effortful

System 2 is the conscious, reasoning, calculating part of your brain. It handles complex arithmetic, careful comparisons, logical deduction, and anything that requires you to hold multiple variables in working memory at once.

Here’s the thing about System 2: it’s lazy. Not broken - lazy. Engaging System 2 takes genuine effort, and your brain is hardwired to conserve effort whenever possible. System 2 will happily accept whatever System 1 offers unless something triggers it to engage - a surprise, a detected error, a deliberate decision to focus.

This means that in most day-to-day situations, System 1 proposes and System 2 endorses. The fast brain offers an answer, the slow brain shrugs and says “sure, sounds right,” and you move on. In many cases this works fine. In estimation meetings, it’s a disaster factory.

The Biases That Matter Most in Coaching

Kahneman and Tversky catalogued dozens of cognitive biases - systematic errors that System 1 produces reliably and predictably. Not all of them are equally relevant to agile coaching, but several show up in team rooms with clockwork regularity.

Anchoring. The first number mentioned in any discussion disproportionately influences all subsequent numbers. In planning poker, if someone says “I think this is about a five” before the cards are revealed, every estimate in the room just shifted toward five. This is why simultaneous reveal exists - and why it matters more than most teams realize.

The Planning Fallacy. People consistently underestimate how long tasks will take, even when they have direct experience with similar tasks taking longer than expected. Kahneman and Tversky showed that people plan based on best-case scenarios by default. System 1 constructs an optimistic narrative (“we’ll build it, integrate it, test it, ship it”) and conveniently edits out the interruptions, blockers, and rework that always show up. This is not a character flaw. It’s a feature of how human brains construct stories about the future.

The Availability Heuristic. People judge the probability of events based on how easily examples come to mind. If the last deploy went smoothly, the team estimates the next one will too - regardless of how many deploys have gone sideways in the last year. Recent, vivid, emotionally charged events get overweighted. Quiet, uneventful sprints get forgotten.

Loss Aversion. People feel the pain of losing something roughly twice as strongly as the pleasure of gaining the same thing. In practice, this means teams resist abandoning a feature they’ve invested weeks in - even when the evidence says it’s the wrong feature. Honoring a sunk cost isn’t rational, but the emotional weight of “losing” that investment is very real. It’s also why killing a bad initiative in sprint review feels so much harder than it should.

WYSIATI (What You See Is All There Is). System 1 builds the most coherent story it can from whatever information is available - and doesn’t bother checking whether important information is missing. A team refining a story based on the PO’s verbal description builds a mental model that feels complete, even when critical details haven’t been discussed. The confidence is real. The completeness is an illusion.

What Makes This More Than Pop Psychology

Here’s where intellectual honesty matters. Some specific findings from social psychology - including a few that appeared in Thinking, Fast and Slow - have faced challenges in the replication crisis. The most notable is ego depletion (the idea that willpower is a finite resource that gets “used up”), which has not replicated well in large-scale studies. Some priming effects Kahneman cited have also proven difficult to reproduce.

Kahneman himself acknowledged these issues publicly, which is worth noting. In a widely shared 2012 open letter to priming researchers, he warned of a “train wreck looming” for the field and urged researchers to replicate their core findings.

But here’s what has held up: the major biases - anchoring, planning fallacy, loss aversion, availability heuristic - have replicated robustly across cultures and decades, and the dual-process framework remains a widely used way to organize them. The 1979 paper in which Kahneman and Tversky introduced prospect theory remains one of the most cited papers in economics. The Nobel committee didn’t make a mistake.

So when you bring this into a coaching context, you’re standing on solid ground. The specific mechanisms are well-established. The practical implications are real. Just don’t lean on ego depletion in your next workshop, and you’ll be fine. (Because nothing undermines credibility faster than citing a finding that your team’s one research-minded engineer debunked over lunch.)

What This Changes About Your Coaching

Estimation and Planning

The planning fallacy is probably the single most useful concept in this entire book for agile practitioners. It explains why teams systematically underestimate - not because they’re bad at estimating, but because their brains are wired to construct optimistic narratives.

The fix isn’t to tell people to “estimate more carefully.” That’s like telling someone to “be taller.” The fix is to change the estimation process so that System 2 actually engages.

Techniques that work: reference class forecasting (comparing the current story to actual data from similar past stories, not to how the team remembers those stories going), simultaneous reveal in planning poker (defeats anchoring), and explicit pre-mortems (“imagine this story took three times longer than expected - what went wrong?”). Each of these is a System 2 activation technique. They force the slow brain to check the fast brain’s homework.
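If reference class forecasting sounds abstract, here is a minimal sketch of what it might look like with a spreadsheet's worth of data. Everything in it is an assumption for illustration: the tags, the cycle times, and the function names are hypothetical, and a real team would pull the history from its own tracker. The point is only that the "outside view" comes from recorded actuals, not from how anyone remembers the work going.

```python
from statistics import median

# Hypothetical history: (story tags, actual cycle time in days).
# Illustrative numbers only; replace with data from your own tracker.
HISTORY = [
    ({"api", "integration"}, 8),
    ({"api", "integration"}, 5),
    ({"ui"}, 2),
    ({"api"}, 4),
    ({"integration", "reporting"}, 9),
    ({"ui", "reporting"}, 3),
]


def reference_class(tags, history, min_overlap=1):
    """Return actual cycle times of past stories sharing at least min_overlap tags."""
    return [days for past_tags, days in history if len(tags & past_tags) >= min_overlap]


def outside_view(tags, history, min_overlap=1):
    """Summarize what similar stories actually took, or None if nothing matches."""
    days = reference_class(tags, history, min_overlap)
    if not days:
        return None
    return {"n": len(days), "median_days": median(days), "max_days": max(days)}


if __name__ == "__main__":
    # Two past stories carry both tags; they took 8 and 5 days.
    print(outside_view({"api", "integration"}, HISTORY, min_overlap=2))
    # {'n': 2, 'median_days': 6.5, 'max_days': 8}
```

Matching on shared tags is a deliberately crude way to define "similar." The team's own judgment about which past stories belong in the reference class will usually be better, as long as the numbers attached to those stories are the recorded ones.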

Historical velocity is, in Kahneman’s terms, the “outside view” - it tells you what actually happens to teams like yours, regardless of the optimistic story your inside view is constructing. This is why velocity-based forecasting works better than gut-feel estimation. Not because the math is fancy, but because it routes around the planning fallacy.
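The arithmetic behind a velocity-based forecast is simple enough to sketch in a few lines. The version below is one possible approach, not a standard formula: it divides a backlog by the best, average, and worst velocities the team has actually recorded, and reports a range instead of a single number. The backlog size, the velocities, and the function name are all hypothetical.

```python
import math


def forecast_sprints(backlog_points, velocities):
    """Estimate sprints needed using best, average, and worst observed velocity."""
    if not velocities or min(velocities) <= 0:
        raise ValueError("need at least one velocity, all of them positive")
    average = sum(velocities) / len(velocities)
    return {
        # Round up: a partially used sprint is still a sprint on the calendar.
        "optimistic": math.ceil(backlog_points / max(velocities)),
        "typical": math.ceil(backlog_points / average),
        "pessimistic": math.ceil(backlog_points / min(velocities)),
    }


if __name__ == "__main__":
    # Hypothetical team: 200 points of backlog, last three sprints at 28, 34, 40.
    print(forecast_sprints(200, [28, 34, 40]))
    # {'optimistic': 5, 'typical': 6, 'pessimistic': 8}
```

Reporting a range is a design choice. A single number invites System 1 to treat it as a promise, while a spread of five to eight sprints keeps the uncertainty in front of everyone.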

Retrospectives

Retrospectives live or die on the quality of the team’s collective memory - and memory is exactly where System 1 does some of its most creative editing.

The availability heuristic means that whatever happened most recently, most dramatically, or most emotionally will dominate the retro conversation. The quiet improvement that unfolded steadily over three weeks? Invisible. The production incident on Thursday? That’s all anyone can talk about.

Coaches can counter this by externalizing memory. Sprint burndown charts, commit logs, incident timelines, customer feedback received during the sprint - lay out the data before asking “what went well?” This gives System 2 something to work with instead of letting System 1 reconstruct a narrative from emotional highlights.

There’s another retro trap worth naming. When teams discuss what went wrong, anchoring kicks in hard. The first person to offer a root cause sets the frame for the entire conversation. If a senior developer says “the deploy pipeline was the bottleneck,” everyone else’s analysis tilts toward the pipeline - even if the real issue was unclear requirements or a missing integration test. Try having people write their observations silently before discussing. It’s the retro equivalent of simultaneous reveal in planning poker, and it works for the same reason.

Decision-Making

WYSIATI is a trap that swallows product decisions whole. When a team or PO makes a decision based on the information in front of them - without asking what information might be missing - they’re building a confident story on incomplete evidence.

A simple coaching intervention: before any significant decision in refinement or sprint review, ask “What would we need to know that we don’t currently know?” This question is almost annoyingly simple. It is also remarkably effective at puncturing the false completeness that System 1 provides.

Anchoring plays a role here too. When a PO presents a solution they’ve already sketched out, the team’s analysis anchors to that solution. Alternative approaches get evaluated as deviations from the anchor rather than as independent options. I’ve found that asking the team to generate two or three possible approaches before the PO shares their preferred one produces dramatically better refinement conversations. It’s a small procedural change that gives System 2 room to operate before System 1 locks in on the first plausible answer.

Product Development

Loss aversion explains why organizations struggle to kill features, sunset products, or pivot strategies - even when the data screams for it. The investment already made looms larger than the value yet to be captured.

I’ve watched teams spend an entire sprint polishing a feature that analytics showed nobody was using. When I asked why, the answer was always some version of “we’ve already put so much work into it.” That’s loss aversion wearing a project management costume.

Coaches can help by reframing the question. Instead of “should we abandon this feature?” try “if we were starting fresh today with no prior investment, would we build this?” The second question strips out the sunk cost and lets System 2 evaluate the actual merits.

Loss aversion also shows up in how teams handle technical debt. Refactoring means temporarily “losing” velocity on visible features in exchange for future gains in maintainability. That trade feels terrible to System 1 - the loss is concrete and immediate, the gain is abstract and deferred. This is why technical debt conversations benefit enormously from data. Show the team how many hours they lost last quarter to workarounds caused by the debt. Make the invisible cost visible, and you shift the framing from “losing sprint capacity” to “recovering sprint capacity.” Same facts, different frame, completely different emotional response.

What It Looks Like in the Room

Dana had been coaching teams at a regional logistics company for about eight months. Three cross-functional teams, all working on the same warehouse management platform, all sharing a single product owner named Terrence who was stretched thinner than he’d ever admit.

The pattern had become predictable. Every two weeks at sprint planning, Terrence would walk through the priorities, the teams would estimate, and the commitments would land somewhere between ambitious and delusional. Then the sprint would unfold the way sprints unfold - interruptions, production support tickets, a dependency nobody flagged - and the teams would deliver about sixty percent of what they’d planned.

Dana had tried the usual coaching moves. She’d facilitated conversations about sustainable pace. She’d helped the teams track their velocity and compare it to their commitments. The data was right there on the wall: average velocity of 34 points, average commitment of 52 points. The gap was visible to anyone who looked.

Nobody looked. Or rather, they looked and then planned as if the data didn’t apply to them.

After reading Kahneman, Dana recognized the pattern for what it was. The teams weren’t ignoring their velocity data out of stubbornness. They were running on System 1. Every planning session, the team would hear Terrence describe a story, mentally simulate the work (“we’ll set up the API endpoint, wire it to the existing service, write the tests, done”), and produce an estimate based on that optimistic simulation. The planning fallacy was running the meeting.

At the next planning session, Dana tried something different. Before any estimation began, she put three sprints of actual cycle time data on the screen - not velocity, but the literal number of days each story had taken from “in progress” to “done.”

“Before we estimate anything today,” she said, “let’s just look at the last thirty stories we completed and how long they actually took.”

The room got quiet. Stories the teams remembered as quick wins had taken four or five days. Stories they’d estimated at three points had taken the same elapsed time as stories estimated at eight. The data didn’t match the narrative.

Then she introduced one more step. After the team estimated each story with planning poker, she asked: “Now look at the three most similar stories from last sprint. What did those actually take?” She wasn’t asking them to change their estimates. She was asking them to check their System 1 output against System 2 data.

The effect was immediate. Not dramatic - immediate. Estimates shifted. Not by a lot, but consistently in the direction of realism. One developer said, “I was about to say three, but the last time we did something like this it took us a week and a half.” Another said, “I think my gut is lying to me.”

(That second comment became a team catchphrase. “Is your gut lying to you?” showed up on a sticky note above the planning board and stayed there for months.)

Over the next four sprints, the commitment-to-delivery gap narrowed from 40% to under 15%. The teams weren’t working faster. They were planning more honestly. And Terrence - who had spent months frustrated by missed commitments - started trusting the team’s estimates enough to make realistic promises to stakeholders.

At a retro six weeks later, one of the senior developers summed it up: “We didn’t get better at building software. We got better at knowing what we don’t know.”

Dana smiled. That was System 2 talking.

Here’s the part that stuck with her most, though. Six months later, she introduced the same technique to a different team at the same company. It took them exactly one sprint to adopt it. The catchphrase migrated. “Is your gut lying to you?” appeared on a second team’s board within a week.

Good frameworks spread like that. Not because someone mandates them, but because they name something people already suspected was true - and give them permission to act on it.

Go Deeper

- Kahneman, D. (2011). Thinking, Fast and Slow. Farrar, Straus and Giroux. The definitive popular treatment. Dense but readable - plan to underline a lot.

- Tversky, A. & Kahneman, D. (1974). “Judgment Under Uncertainty: Heuristics and Biases.” Science, 185(4157), 1124-1131. The original paper that launched the field. Still cited constantly, still worth reading directly.

- Kahneman, D. & Tversky, A. (1979). “Prospect Theory: An Analysis of Decision Under Risk.” Econometrica, 47(2), 263-291. The formal model behind loss aversion. One of the most cited papers in economics - and the core of Kahneman’s Nobel Prize work.

- Lewis, M. (2016). The Undoing Project: A Friendship That Changed Our Minds. W.W. Norton. Michael Lewis tells the story of the Kahneman-Tversky collaboration. If the science feels dry, start here - it reads like a novel.

- Buehler, R., Griffin, D. & Ross, M. (1994). “Exploring the ‘Planning Fallacy’: Why People Underestimate Their Task Completion Times.” Journal of Personality and Social Psychology, 67(3), 366-381. The deep dive on the planning fallacy specifically - directly applicable to anyone coaching estimation practices.